If this isn’t a handy list of shortcuts to useful shell folders in Windows, I don’t know what is.

Shell Commands to Access the Special Folders in Windows 7/Vista/XP – The Winhelponline Blog.

Three days of screwing around and, as far as I can tell, I’ve successfully moved all my data from one array to another while keeping the machine and data online the whole time, other than a few minutes of reboots to remove and replace hardware. Not bad for a SOHO file & web devel server.

After the move was complete, and while the array was re-syncing, I expanded the LVM logical volume and the file system inside of it. Expanding the LV was simple and fast using lvextend (8) and the following command…

root@host:# lvextend -l +100%FREE /dev/data/home

It takes a couple of seconds to allocate the new extents and return, and that’s done.

Expanding the ext4 FS takes a bit longer, but as long as it’s being extended and not contracted, it can be done while the FS is online and mounted.

root@host:# resize2fs /dev/data/home

Adding -p to resize2fs (8) should print progress information as the resize runs (note the lowercase; -P only prints an estimate of the minimum size), though I didn’t try it.
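Put together, the two steps look roughly like the sketch below. It assumes the volume group is `data` and the logical volume is `home` (the names from my array migration), and with `DRY_RUN=1` it only prints the commands, so you can check what it would do before letting it loose.

```sh
#!/bin/sh
# Sketch: grow an LVM logical volume into all free space in its volume
# group, then grow the ext4 file system inside it while it stays mounted.
# Assumed names: VG "data", LV "home". DRY_RUN=1 prints without running.
set -eu

# Print a command, and execute it unless DRY_RUN=1.
run() {
    printf '+ %s\n' "$*"
    if [ "${DRY_RUN:-0}" != "1" ]; then
        "$@"
    fi
}

grow_lv_and_fs() {
    vg="${1:-data}"
    lv="${2:-home}"
    # Hand every free extent in the VG to this LV.
    run lvextend -l +100%FREE "/dev/$vg/$lv"
    # resize2fs takes the block device, not the mount point; with no size
    # argument it grows the file system to fill the device.
    run resize2fs "/dev/$vg/$lv"
}
```

After sourcing it, `DRY_RUN=1 grow_lv_and_fs data home` previews the two commands. lvextend also has a `-r` (`--resizefs`) option that resizes the file system in the same step, which would collapse this into one command.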

Rebooting to remove the old, now archival, hard drive and bringing the system back up raised an interesting head scratcher. The MD device that should have come up as /dev/md0 came up as /dev/md127. The /etc/mdadm.conf file looked correct, but the device wasn’t showing up where it should have in /dev. The fix seems to be rebuilding the initramfs, which carries its own copy of mdadm.conf…

root@host:# update-initramfs -u

With that done and a reboot, the md device shows up as md0 like it’s supposed to.

Additionally, md apparently now supports giving arrays descriptive names, like “hostname:1”, and the array shows up under /dev/md/ by that name in addition to the regular /dev/md* device.

I went with these drives based on this post on the Backblaze blog. Well, with the caveat that I’m still leery of 3TB drives, so I’m using 2TB drives. I don’t even think that the board or the SATA controllers I have in this box support >2TB drives (though the update later this month will).

More interestingly, the Hitachi 5k3000s are 512-byte sector drives, not 4K sector “Advanced Format” drives. While 4K sectors do have advantages on large drives in terms of ensuring data integrity, they also make partitioning and alignment somewhat fun. Partitions have to be aligned to 4K boundaries (fdisk, as well as Windows’ partition tools, align to 1MiB, or 2048 512-byte sectors, when DOS compatibility mode is disabled). On top of that, you have to be careful where the MD device places its metadata, as that can shift the FS alignment as well. And for that matter, I have no idea what kind of overhead LVM adds in terms of alignment. In short, 4K drives are, IMO, still something of a mess, and probably will be for some time.
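To check where a partition actually starts, Linux exposes each partition’s starting sector in sysfs, counted in 512-byte units regardless of the drive’s physical sector size. A start that is a multiple of 8 (8 × 512 = 4096) sits on a 4K boundary. This is a minimal sketch: the disk and partition names are placeholders, and it only checks the partition itself, not the md metadata or LVM offsets stacked on top of it.

```sh
#!/bin/sh
# Sketch: report whether a partition starts on a 4 KiB boundary.
# sysfs reports partition starts in 512-byte sectors.
set -eu

# Exit 0 if START_SECTOR (in 512-byte units) is 4 KiB aligned.
is_4k_aligned() {
    # 4096 / 512 = 8 sectors per 4K physical sector.
    [ $(( $1 % 8 )) -eq 0 ]
}

# Usage: check_partition DISK PART   e.g. check_partition sdb sdb1
check_partition() {
    start=$(cat "/sys/block/$1/$2/start")
    if is_4k_aligned "$start"; then
        echo "$2 starts at sector $start: 4K aligned"
    else
        echo "$2 starts at sector $start: NOT 4K aligned"
    fi
}
```

The old DOS-compatible layout that put the first partition at sector 63 fails this check, while the 1MiB default (sector 2048) passes.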

One nice thing is I’m seeing about 2x the performance from these Hitachi drives that I was getting from the WD Greens, even though I believe those were properly aligned. Benchmarks show these drives can do 140MB/s on the outer tracks. I don’t get that yet, but I’m hopeful that a new system with a faster CPU (Xeon E3-1220) and more modern SATA controllers (not the ancient SiI3114 on this Tyan Thunder K8W) will get me closer.

Who knew LVM would be good for something? Well, maybe; I’ll know for sure sometime tomorrow, or late tonight. If it works, it’ll be great. If it doesn’t, I’ll be damn glad I backed up these drives.

Yeah, so back to LVM. I always wondered whether creating an LVM volume on top of an MD RAID volume was a good idea, or whether it just added extra overhead. An ext4 file system can be extended without the help of LVM, and so can an MD RAID device. So why add the extra layer?

pvmove

That’s why.

Wanting to avoid the “blow it away and restore from backup” strategy, especially since WD Caviar Greens are so damn slow compared to just about everything else, I decided the best course of action would be to split the existing unresizable md array and create a second one. Something like…

mdadm /dev/md0 --fail /dev/sdb1
mdadm /dev/md0 --remove /dev/sdb1
mdadm --create /dev/md1 --level=1 --raid-devices=2 /dev/sdb1 missing

The end result, 2 degraded but fully functional md arrays. One still hosting the data volume group with my home logical volume, and one with a big empty disk.

The trick now is to move the data.

The question of how to move the data stumped me for a bit. I could create a new volume group (VG), or at least a new logical volume in the same data VG I already had, format it, and rsync the data across. Of course, then I would have to edit at least my /etc/fstab to get things pointed to the right place. The alternative that came up as I was digging through the LVM documentation is a nifty command called pvmove (8), which moves the physical extents in a volume group from one physical volume to another (or to several physical volumes in the group if needed). Moreover, as best I can interpret the docs, it does this in a way that’s safe to do with the system online.

All told, for my system, the process looked something like this…

vgextend data /dev/md1
pvmove /dev/md0 /dev/md1

Now it’s back to the waiting game. It’ll be 5 or 6 hours before the pvmove is complete; then I have to tear down the md0 RAID array and add the /dev/sdd device that’s left in md0 to md1. That will necessitate a 6-hour or so re-sync. After that, I’ll reboot and make sure md1 becomes md0 and everything is found properly. Then it should hopefully be a short task of expanding the logical volume from 1.5TB to 2TB, and then the ext4 file system inside of it. If not, well, I’ll be damn glad I made that 6-hour-long backup, won’t I?
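The cleanup after pvmove finishes might look something like this dry-run sketch. The `data` VG, `/dev/md0`, and `/dev/md1` come from the post; `/dev/sdd1` is a hypothetical name for the partition left in md0 (I only know it as `/dev/sdd`). A nice side effect of the ordering: vgreduce refuses to drop a PV that still has extents allocated, so it doubles as a check that pvmove really finished.

```sh
#!/bin/sh
# Sketch of the post-pvmove cleanup. Device names are assumptions, and
# /dev/sdd1 in particular is hypothetical. DRY_RUN=1 prints without running.
set -eu

run() {
    printf '+ %s\n' "$*"
    if [ "${DRY_RUN:-0}" != "1" ]; then
        "$@"
    fi
}

finish_migration() {
    vg="${1:-data}"
    old_md="${2:-/dev/md0}"
    new_md="${3:-/dev/md1}"
    leftover="${4:-/dev/sdd1}"
    # Remove the now-empty PV from the VG (fails if extents remain on it),
    # then wipe its LVM label.
    run vgreduce "$vg" "$old_md"
    run pvremove "$old_md"
    # Stop the old array and clear the md superblock from its last member.
    run mdadm --stop "$old_md"
    run mdadm --zero-superblock "$leftover"
    # Add that partition to the new, degraded RAID1; this starts the resync.
    run mdadm "$new_md" --add "$leftover"
}
```

Resync progress then shows up in /proc/mdstat.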

Normally the complexity of doing something in Linux doesn’t bother me. Arcane and convoluted commands don’t scare me, and they never really have; they just take some getting used to. The problem I have is when the command, or the underlying system, is only half implemented.

My current project has been replacing a pair of 1.5TB WD Caviar Greens with 2TB Hitachi 5k3000s. Yes, I see the irony in replacing WD drives with drives made by a company that just sold its drive division to WD. On the upside, 500GB more space nets me enough room to back up the rest of the computers on the network and still have as much free space as I had before, which was running down anyway. Oh, and the Hitachis are faster too.

Replacing the drives in the RAID array has gone smoothly enough using the following procedure:

mdadm /dev/md0 --fail /dev/sdX#
mdadm /dev/md0 --remove /dev/sdX#
mdadm /dev/md0 --add /dev/sdX#

Between the --remove and the --add, the old drive gets swapped out and the new one partitioned. I’ve done this for two 1.5TB Greens: one that was failing and one that’s now going to become a proper backup target.
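Before failing the second drive, the first replacement has to finish rebuilding, or the array is left with no redundant copy. A minimal sketch for checking that from a script, assuming the usual progress lines the kernel writes to /proc/mdstat (`recovery = …`, `resync = …`):

```sh
#!/bin/sh
# Sketch: exit 0 while any md array is rebuilding, based on the progress
# lines in /proc/mdstat. Pass another file (e.g. a saved copy) to test.
set -eu

md_rebuilding() {
    grep -Eq '(recovery|resync|reshape)[[:space:]]*=' "${1:-/proc/mdstat}"
}
```

Something like `while md_rebuilding; do sleep 60; done` before the next `--fail` does the job; mdadm’s own `--wait` option, which blocks until resync or recovery on the given device finishes, is the other route.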

Now that I have two 2TB drives in there, I want to use them, and that means growing the md array to the full size of the drives. So far as I can tell, that should be a simple…

mdadm -G /dev/md0 --size=max

…but apparently that’s not the case if the array is configured as RAID10. RAID10 gives the performance of RAID0 with the redundancy to lose a disk, which IMO is perfect for slow 5K RPM disks. MD even has a nice feature where a RAID10 array can be created in a partial 2-disk configuration and then extended to the full 4+ disk configuration later. In the “partial” mode, it behaves exactly like a RAID1 array.

Which brings me to the meat of this rant. I can re-size a RAID1 array, I can convert a RAID 1 array to 5, 6, or even 0. However, mdadm can’t re-size a RAID10 array, even if it’s running in what amounts to RAID1 mode, or convert it to RAID1 or any other RAID level for that matter.

Sigh…

Now it’s off to back up the damn thing, kill it, rebuild it, and restore everything… At least I’ll know if my backup procedure works.

I’ve been fighting with this for quite some time. I moved to a VPS over a year ago in hopes of a more stable Dreamhost experience, and for a while it was. Then about 9 months ago my site started crashing out of the blue. I’d be chugging along just fine, then, “blam!”, site down. I started aggressively caching things with WP Super Cache, then W3 Total Cache. It helped a little, but ultimately things just got more and more unstable. About 2 months ago I gave up on using phpMyAdmin when I needed to do SQL stuff, simply because it was an instacrash for my VPS. About 3 weeks ago, I’d had enough and decided it was time to seriously track down the problem.

To make a long story short, Wordpress is a massive memory hog. I’m pushing 30MB on average before I even start loading plugins. That’s not a lot if you have an 8GB server dedicated to nothing but serving Wordpress, but 30MB is 5-10% of a small VPS. I’ve gone through all the Wordpress tuning guides I can find. I’ve manually cleaned up the database. Nothing really helps. Of course, if the server were configured for the load it has, the problem would be considerably smaller.

Which brings us back to Dreamhost’s VPS. They say it’s designed to scale with the RAM that’s allocated to it. Sure, maybe if you’re running static HTML pages. In that case the 69 concurrent clients configured on a 400MB server would get ~6MB apiece (400 / 69 ≈ 5.8), which is just barely enough for Apache to serve static HTML. Even then it doesn’t really work out, since there’s non-Apache overhead. In fact, now that I think about it, by default under full load Apache is configured in such a way that it can easily exceed a VPS’s memory allotment just serving static content. 😮

Then comes mod_fcgi. By default it’s configured to allow 20 instances per process class (I’ll come back to this), and the Apache default is 1000.

What are process classes, and why are they important? Processes in the same class are spawned from the same executable and share a common virtual host and identity. For example, if my virtual host for cult-of-tech spawns a CGI process, that process can’t be used by another virtual host on my server. Now here’s the kicker. When Wordpress gets going and everything is loaded, that 30MB+ of Wordpress, plus all the PHP overhead, plus whatever space you allot for caching (XCache, APC), is how big the fcgi instance will be. In my case, that means each php.cgi instance is 60-70MB. On a 400MB server, once you spawn 5-6 PHP processes you’ve used up the entirety of your VPS’s memory and, again, blam!
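To get the per-instance figure for your own host, you can average the resident size of the running PHP workers. A sketch, assuming procps `ps` (for the `-C` option) and that the workers show up under the command name `php.cgi`; check `ps` output for the actual name on your server.

```sh
#!/bin/sh
# Sketch: print the average resident set size, in whole MB, of processes
# with a given command name (default "php.cgi", an assumption). Prints 0
# if none are running. Requires procps ps for the -C option.
set -eu

avg_rss_mb() {
    # "rss=" prints RSS in KiB with no header; awk averages and converts.
    ps -C "${1:-php.cgi}" -o rss= 2>/dev/null |
        awk '{ sum += $1; n++ } END { if (n) printf "%d\n", sum / n / 1024; else print 0 }'
}
```

Take the reading while the site is under real load, since an idle worker that hasn’t loaded Wordpress and its plugins yet will look much smaller.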

The real kicker, though, is Dreamhost’s overly aggressive memory manager on their VPSes. Instead of killing off processes, or for that matter special-casing it and just restarting Apache if it’s running, the watchdog simply kills off the whole VPS. It may do more than that, too, because it can take 10 minutes for the VPS to come back up unless you manually reboot it.

Interestingly enough, the answer to all of this is not simply to throw money at it. While troubleshooting this I temporarily pushed my memory limits up, and even at 600MB or 800MB the config would still allow enough processes to crash the server. For that matter, my development server, which has a ton of RAM available, can comfortably do many of the things that were causing my VPS to crash without exceeding a 200MB memory footprint.

Simply put, there’s no reason a lightly trafficked Wordpress site should require more than 300MB, maybe 400MB, but certainly not 600MB to simply stay upright. At least not with a properly configured server behind it.

The moral of the story is:

And what am I doing about this?

I’ve curtailed my Apache and mod_fcgi configs to more reasonable settings.

I’ve set mod_fcgi’s MaxProcesses directive to floor((400 - typical_process_size) / typical_process_size) and my Apache MaxClients to floor(((typical_process_size * 2) - 20) / 5), with sizes in MB. I won’t be any more specific than that, because what will actually work while still being performant varies based on site, software, traffic, caching, and number of virtual hosts.
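Here are the two rules of thumb above as shell arithmetic, so you can plug in your own measured process size. Shell integer division truncates, which matches floor() for the positive values involved. The 400MB budget is my VPS’s, so it’s a parameter here; these are starting points, not tuned values.

```sh
#!/bin/sh
# The sizing rules from the post as functions. Sizes are in MB.
set -eu

# MaxProcesses = floor((budget - typical_process_size) / typical_process_size)
max_processes() {
    typical=$1
    budget=${2:-400}
    echo $(( (budget - typical) / typical ))
}

# MaxClients = floor(((typical_process_size * 2) - 20) / 5)
max_clients() {
    typical=$1
    echo $(( (typical * 2 - 20) / 5 ))
}
```

For a 65MB php.cgi process on a 400MB VPS, `max_processes 65` gives 5 and `max_clients 65` gives 22.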

I don’t use the registry editor all that much, but when I do, I’m almost always going to some specific spot not browsing around the tree. I should be able to type HKLM\blah\blah\blah and have the registry editor open up to that part of the hive right away. Need I say more?

I find it handy, especially when developing and tuning templates and plugins, to get some info about how the page performed.

Prior to Wordpress 3, I’d define a constant in the wp-settings.php file on my development server and then have my theme check whether it was set and, if it was, insert a small fixed-position div that contained memory, DB query, and page render statistics. It worked, but it wasn’t very elegant.

Wordpress 3 introduced the concept of the admin bar. Available to all logged-in users, the admin bar provides a convenient way to handle and display some simple page stats.

Currently the plugin displays 3 stats: max memory used, number of DB queries reported by $wpdb, and page render time. They appear for all logged-in users.

Download: cot-admin-bar-stats.zip

When it comes to software, providing a feature or function that’s broken is worse than not providing the feature at all.

Let me weave the story of broken functionality from this weekend.

Google’s Apps for Domains provides 2 ways to reset your administrative password. Either you have an alternative address configured and a check-box checked allowing Apps for Domains to reset your password using that address, or, if you missed that configuration option, you can go through an alternative process that involves creating a DNS record with a value provided by Google to verify that you own the domain.

From what I’ve gathered, the functionality is supposed to work like this: you create a CNAME record with a value provided by Google and point it at google.com. Google’s system is then supposed to look up that CNAME record and verify that it points to Google’s domain. If it does, the instructions for resetting your password are sent to the email address you provide when you go through the recovery process.
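Before waiting on Google’s automated check, you can at least confirm from your side that the record resolves the way it should. A small sketch, assuming `dig` is installed; the record name you pass is whatever Google assigned you, and a pass here only rules out your own DNS, not a problem on Google’s end.

```sh
#!/bin/sh
# Sketch: confirm a verification CNAME resolves to google.com. Record
# names are placeholders; requires dig (from the bind9 utilities).
set -eu

# Exit 0 if TARGET (as printed by dig +short) is google.com.
cname_points_to_google() {
    # dig prints names fully qualified with a trailing dot; drop it and
    # compare case-insensitively.
    target=$(printf '%s' "${1%.}" | tr '[:upper:]' '[:lower:]')
    [ "$target" = "google.com" ]
}

# Usage: check_verification_record NAME
check_verification_record() {
    found=$(dig +short CNAME "$1")
    if cname_points_to_google "$found"; then
        echo "$1 -> $found (OK)"
    else
        echo "$1 -> ${found:-no CNAME} (does not point at google.com)"
        return 1
    fi
}
```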

The problem is, the system is broken right now.

What should happen and what actually happened are two different things. In reality I’ve received 2 automated responses from Google indicating that the system couldn’t verify the CNAME record. After searching the Google Apps support forums, it turns out that the CNAME method is currently broken and that Google is aware of the issue but hasn’t bothered updating anything on their site to note that or to temporarily disable the function.

Since the functionality was present and failed in the same way one would expect it to fail if you had simply configured something wrong, the result is spinning your wheels with no results. If Google had disabled the functionality or provided a link to their support request system in the resulting email, I could have at least opened a ticket after the first failure instead of going through the process again, double-checking everything.

The least they could have done was provide a link to their support ticketing system. The worst possible thing to do to customers is leave them spinning their wheels. In this case, at a minimum, the auto-reply email for a failure should include a way to open a ticket on the issue instead of just sending you back to the same process with a generic error.

The moral? If you’re a developer and you realize that some functionality you’re providing isn’t working, disable it until you’ve fixed it. If that means you have to deal with more support tickets for a while, so be it. This is even more important in a customer service situation like resetting a password.

Needless to say, if you use Google Apps for Domains and your administrator account doesn’t have a secondary email address that can be used for resetting the password, set that up posthaste. On top of that, it might not be a bad idea to create a second administrator account with a long random password that’s stored in a safe place. With a second admin account, you could log in and reset the primary admin account’s password.

Windows 7 never ceases to amaze me. I recently brought up a Win 7 Home pro box using some hardware that’s pretty archaic by modern standards. While not the most stellar performer, it does surf the web well enough, and considering that was its intended mission, I’d say it’s been successful.

Actual specs are:

I was utterly impressed that not only did everything work, but everything was detected and worked right out of the box, even installing to the SATA controller, which was always a problem for Windows XP. Though I guess I really shouldn’t be so surprised, given 9 years of OS development.

Nonetheless, the real concern was performance, for which the machine scores a blistering (okay, not really) 3.2 on the Windows Experience Index. The limiting factor is actually the CPU.

I don’t know what I found to be more surprising, the fact that all the old hardware worked under the new OS or that the system is just as usable with more features and better security as XP SP3 was on the same hardware.

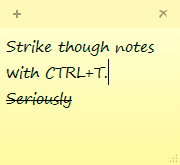

One of my favorite features in Windows 7 (I skipped Vista, so it may apply there too) is Sticky Notes, the virtual version of their 3M counterparts. I use them mostly the same way, too, which means sometimes I want to strike out a completed task instead of simply deleting it from the note or deleting the note.

The solution to the quandary came from a fellow on Twitter. Who knew Twitter could be helpful?

In a sticky note, select the text you want to strike out and press CTRL+T. Bam! Struck-out text, and you don’t need a tablet and pen to draw a line through it.